Evripidis Gkanias

Research Fellow in Computational Sensory Biology

Lund University

Evripidis is a post-doctoral Research Fellow at Lund University.

He is interested in bio-accurate artificial intelligence and information theory. He currently investigates the effectiveness of the insects’ celestial compass and its adaptation to different environments. Inspired by insect neuroscience, he tries to build sensors and navigation systems that are robust to environmental disturbances.

Scientific questions he is currently working on:

- how the celestial compass adapts to new environments,

- how it is integrated with other visual information, and

- how working memory is implemented in the brain.

- Bio-inspired Artificial Intelligence

- Neuroethology of Navigation

- Probabilistic Machine Learning

- Reinforcement Learning

- Bio-inspired Computer Vision

- Multimodal Integration

- PhD, Bio-inspired Robotics & Autonomous Systems, 2023, University of Edinburgh

- MSc, Artificial Intelligence, 2016, University of Edinburgh

- BSc, Computer Science, 2013, Aristotle University of Thessaloniki

Recent Publication

Recent & Upcoming Events

Research Experience

Advisors: Prof Marie Dacke.

Project: Adaptive Compass, Funding: Carl Tryggers Foundation.

I investigate how biological compasses are adapted to different visual environments, with a focus on neuronal adaptations, and develop optimised models of visual orientation mechanisms for different visual habitats.

Advisors: Prof Barbara Webb.

Project: Insect Neuro Nano, Funding: European Research Council.

I explored the effectiveness of different forms of working memory under the constraints of biology and nanotechnology hardware, and built an anatomically accurate polarised light compass sensor and model.

Advisors: Prof Barbara Webb, Prof Subramanian Ramamoorthy.

Thesis examiners: Prof Thomas Nowotny (external), Prof J. Douglas Armstrong (internal).

Project: Insect neuroethology of reinforcement learning, Funding: RAS CDT.

I investigated how insects form associative memories based on reinforcements from the environment and how this impacts their behaviour in the context of reinforcement learning.

University of Sheffield

Advisors: Dr Michael Mangan (Sheffield), Prof Barbara Webb (Edinburgh).

Project: Invisible Cues, Funding: Engineering and Physical Sciences Research Council.

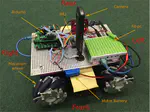

I investigated the information content of polarised light in relation to animal navigation, then used the outcomes to develop a technical specification and design for manufacture of a novel robot sensor.

Advisors: Prof Barbara Webb.

Project: Minimal, Funding: European Research Council.

I focus on imitating the learning mechanism of the larval Drosophila, which forms associations between odours and tastes. The goal is to model this mechanism at the neural level and put it on a robot platform. The robot will try to find the gustatory source by following the gradient of the associated odour.

Advisors: Dr Petros Daras.

Projects: RePlay, FI-STAR, Funding: European Commission.

My main task was to implement a toolbox, using C# and the WPF subsystem, which could be used to analyse and compare human gestures, tracked using different capturing devices, i.e., Microsoft Kinect, Vicon, WIMUs. I also implemented an extension of it, which was compatible with Unity3D.

Teaching Experience

I have been tutoring, demonstrating and marking for a variety of courses including:

- Introductory Applied Machine Learning: tutor, demonstrator, marker. Instructors: Dr Amos Storkey & Prof Chris Williams

- Reinforcement Learning: tutor. Instructors: Dr Pavlos Andreadis & Dr Stefano Albrecht

- System Design Project: Machine Vision and Quantitative Analysis expert. Instructors: Dr Steve Tonneau, James Garforth & Prof Barbara Webb

- Heterogeneous Parallel Programming: I was responsible for answering students' questions regarding the course material and assignments. Instructor: Prof Wen-mei W. Hwu